- Pondhouse Data OG - We know data & AI

- Posts

- Pondhouse Data AI - Tips & Tutorials for Data & AI 47

Pondhouse Data AI - Tips & Tutorials for Data & AI 47

DeepMind’s AI Agent Traps | Oracle’s Durable Agent Memory | Ollama in VS Code | Cursor Self-Hosted Coding Agents

Hey there,

This week’s edition is packed with cutting-edge developments in AI agent memory, secure coding workflows, and the latest research on agent vulnerabilities. We spotlight Oracle’s tutorial on durable AI agent memory, explore the seamless integration of Ollama with VS Code for local and cloud model access, and break down Google DeepMind’s “AI Agent Traps” framework for safeguarding agents against web-based manipulation. Plus, we cover the launch of Neo4j NODES AI 2026, Meta’s advances in robotics and self-optimizing agents, and practical tips for running secure, self-hosted AI agents with Cursor.

Let’s dive in!

Cheers, Andreas & Sascha

In today's edition:

📚 Tutorial of the Week: Building durable AI agent memory, Oracle

🛠️ Tool Spotlight: Ollama + VS Code integration overview

📰 Top News: DeepMind’s “AI Agent Traps” framework launched

💡 Tip: Run AI agents securely with Cursor

Let's get started!

Tutorial of the week

Building Durable AI Agent Memory with Oracle

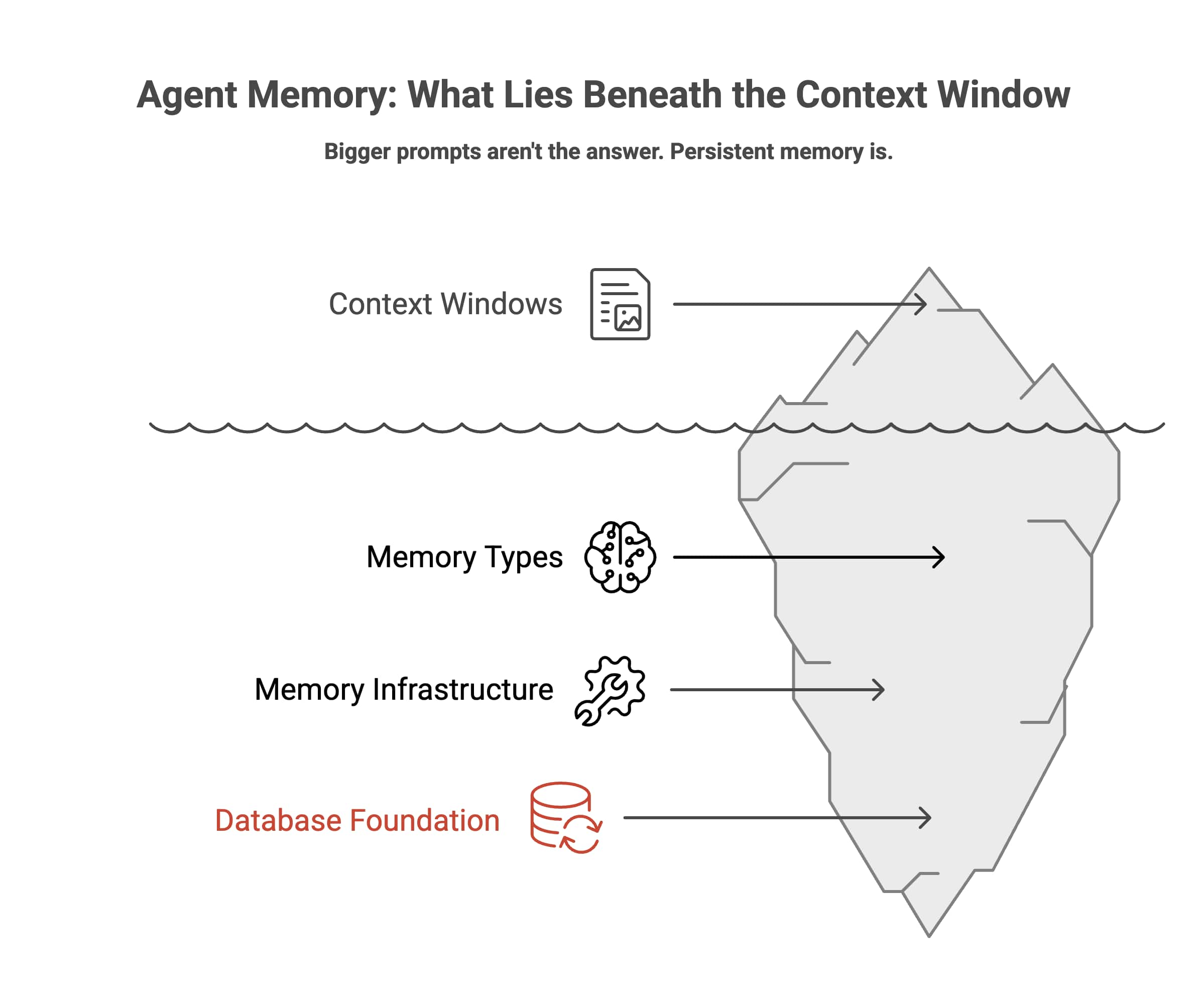

Ever wondered why your AI agents seem to forget everything between sessions? Oracle’s in-depth tutorial, Agent Memory: Why Your AI Has Amnesia and How to Fix It, tackles this critical challenge by guiding you through creating persistent, production-ready agent memory using LangChain and Oracle Database. This resource is a must-read for anyone designing AI agents that need to remember, adapt, and scale reliably.

Comprehensive breakdown of agent memory: Learn the four key memory types (working, procedural, semantic, episodic), why context windows aren’t enough, and how real memory systems work.

Hands-on code and architecture: Includes practical Python code snippets, setup instructions for Oracle’s vector store, and a full, runnable Jupyter notebook for memory engineering.

Production-grade solutions: Covers advanced topics like hybrid retrieval (vector, graph, relational), ACID transactions, security, compliance, and multi-tenancy—essentials for enterprise AI.

Open-source integrations: Explains how to leverage frameworks like LangChain, Letta (MemGPT), and Oracle’s own langchain-oracledb for seamless, scalable agent memory.

Who it’s for: AI engineers, data architects, and technical leaders building agents for customer service, RAG, or any application where memory continuity and reliability are non-negotiable.

If you’re ready to move beyond stateless chatbots and build agents that truly learn and remember, this tutorial is your blueprint.

Tool of the week

Ollama + VS Code — Local and Cloud AI Models in Your IDE

Ollama’s new integration with Visual Studio Code brings the power of local and cloud AI models directly into your development workflow. By connecting Ollama with GitHub Copilot Chat, developers can seamlessly select and run advanced language models—either locally for privacy or in the cloud for scale—without ever leaving their IDE.

Unified AI Model Access: Instantly switch between local Ollama models and cloud-based models within the Copilot Chat panel, offering flexibility for both privacy-focused and resource-intensive tasks.

Streamlined Setup: Launch the integration with a single command (

ollama launch vscode), or configure manually for granular control over model selection and usage.Enhanced Productivity: Leverage AI-assisted code generation, chat, and completion features directly in VS Code, accelerating development cycles and reducing context switching.

No Paid Account Required: Model selection works with GitHub Copilot Free, making advanced AI features accessible to a broader range of developers.

Model Variety: Choose from a growing catalog of models, including open-source and proprietary options, tailored to your specific coding needs.

Ollama’s VS Code integration is gaining traction among developers seeking flexible, privacy-conscious AI coding tools. For setup instructions and more details, see the official documentation.

Top News of the week

Google DeepMind Unveils "AI Agent Traps" to Combat Web-Based Manipulation Attacks

Google DeepMind has launched the "AI Agent Traps" framework, a groundbreaking initiative that exposes how web content can manipulate AI agent pipelines. This new framework categorizes a range of attacks—including content injection, semantic manipulation, cognitive state attacks, and behavioral control—that threaten the integrity of AI agents interacting with online data. The research highlights alarming success rates for prompt injection and memory poisoning, underscoring the urgent need for robust verification mechanisms in agent systems.

The AI Agent Traps framework systematically analyzes vulnerabilities in web-based AI workflows, revealing how malicious actors can exploit external content to steer agent behavior or compromise their memory. By documenting these attack vectors, DeepMind provides both a taxonomy and practical guidance for developers and researchers to safeguard their AI systems. The study also emphasizes that even advanced agents are susceptible to subtle manipulations, making external content verification a critical component of secure AI deployment.

This announcement has sparked important conversations in the AI community about the need for standardized defenses and proactive monitoring. As AI agents become more autonomous and integrated into web environments, the insights from DeepMind’s research will be essential for anyone building or deploying agent-based systems.

Also in the news

Neo4j NODES AI 2026: Pushing the Boundaries of Graph-Powered AI

Neo4j has announced the NODES AI 2026 virtual conference, scheduled for April 15, 2026. The event will feature over 40 technical sessions spanning knowledge graphs, GraphRAG, agentic systems, and memory architectures. With tracks on context graphs, graph-based memory, and production deployments, the conference aims to equip developers and data professionals with practical strategies for building context-aware, explainable, and adaptive AI systems. Attendees can expect deep dives, live demos, and insights from leading experts shaping the future of graph + AI integration.

Meta’s V-JEPA 2.1 Sets New Standard for Video and Robotics AI

Meta AI has released V-JEPA 2.1, a breakthrough self-supervised model for unified image and video understanding. Unlike previous approaches, V-JEPA 2.1 applies supervision to every patch across frames and layers, yielding dense, semantically rich features. This architecture enables state-of-the-art performance in tasks like robotic grasping (+20% success rate), navigation, depth estimation, and action anticipation. The model’s multi-modal tokenizer and scalable design make it a powerful foundation for robotics, video analytics, and embodied AI, with open-source code and pretrained models available for the research community.

ARC-AGI-3: Interactive Benchmark Highlights AI’s Reasoning Gaps

The ARC Prize Foundation has launched ARC-AGI-3, the first interactive reasoning benchmark designed to test AI agents’ ability to learn new tasks through exploration, not recall. Agents are placed in unfamiliar environments without instructions and must deduce rules and goals via trial and error. Results reveal a stark gap between human and AI learning: top AI models score under 1%, underscoring persistent challenges in adaptive reasoning, long-horizon planning, and flexible world modeling. ARC-AGI-3 provides a transparent, replayable platform for evaluating true general intelligence in both humans and machines.

Meta’s Hyperagents: Towards Self-Optimizing AI Systems

Researchers from Meta have introduced the Hyperagents framework, which unifies task-solving and meta-learning into a single editable agent. Unlike prior self-improving systems that rely on fixed meta-level mechanisms, Hyperagents allow both the agent and its self-improvement process to evolve. This enables open-ended, metacognitive self-modification, potentially accelerating autonomous progress across diverse domains. Early results show that DGM-Hyperagents outperform traditional baselines in adaptability and persistent learning, marking a step toward AI systems that can continually refine both their skills and their learning strategies.

Princeton Study: Energy Costs of AI Reasoning Models Under Scrutiny

A recent paper from Princeton University examines the energy footprint of modern AI reasoning models. The study finds that reasoning queries consume up to 79 times more energy per query than standard pattern-matching models, with a single query using 33 Wh versus 0.42 Wh for typical LLMs. The authors argue that smaller, domain-specialist models and knowledge graph-based approaches can drastically reduce inference costs, offering a sustainable path forward as AI adoption scales. The research highlights the need for energy-efficient, local AI systems and provides actionable recommendations for practitioners.

Tip of the week

Run AI Agents Securely with Cursor’s Self-Hosted Cloud Agents

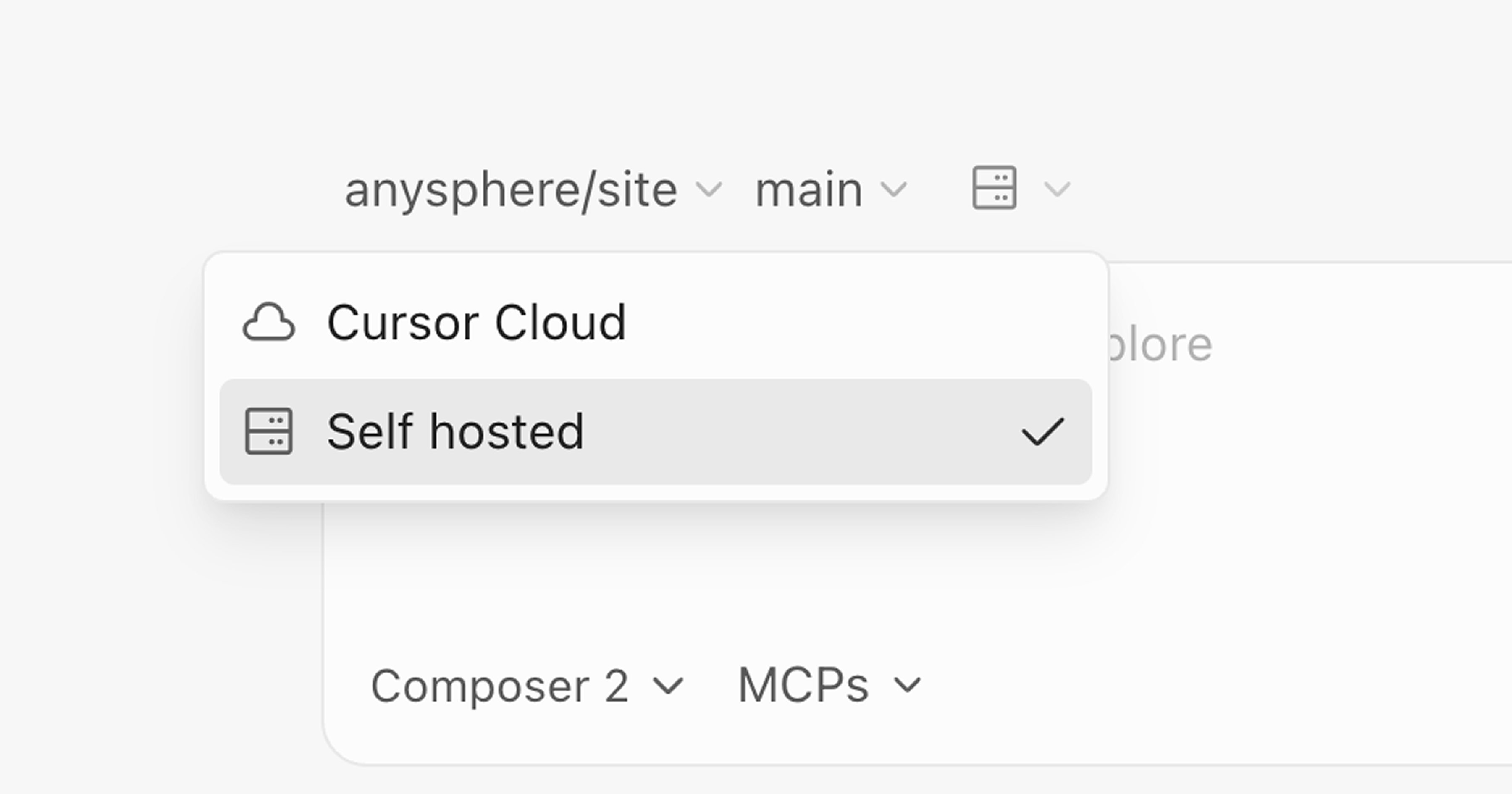

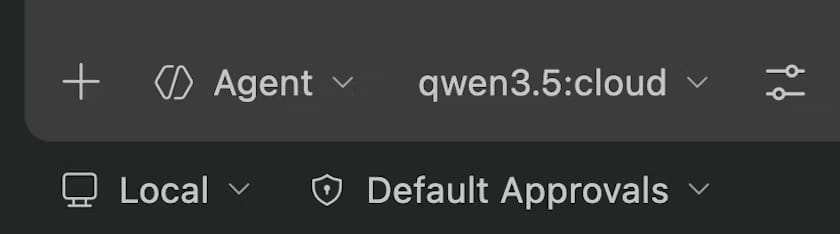

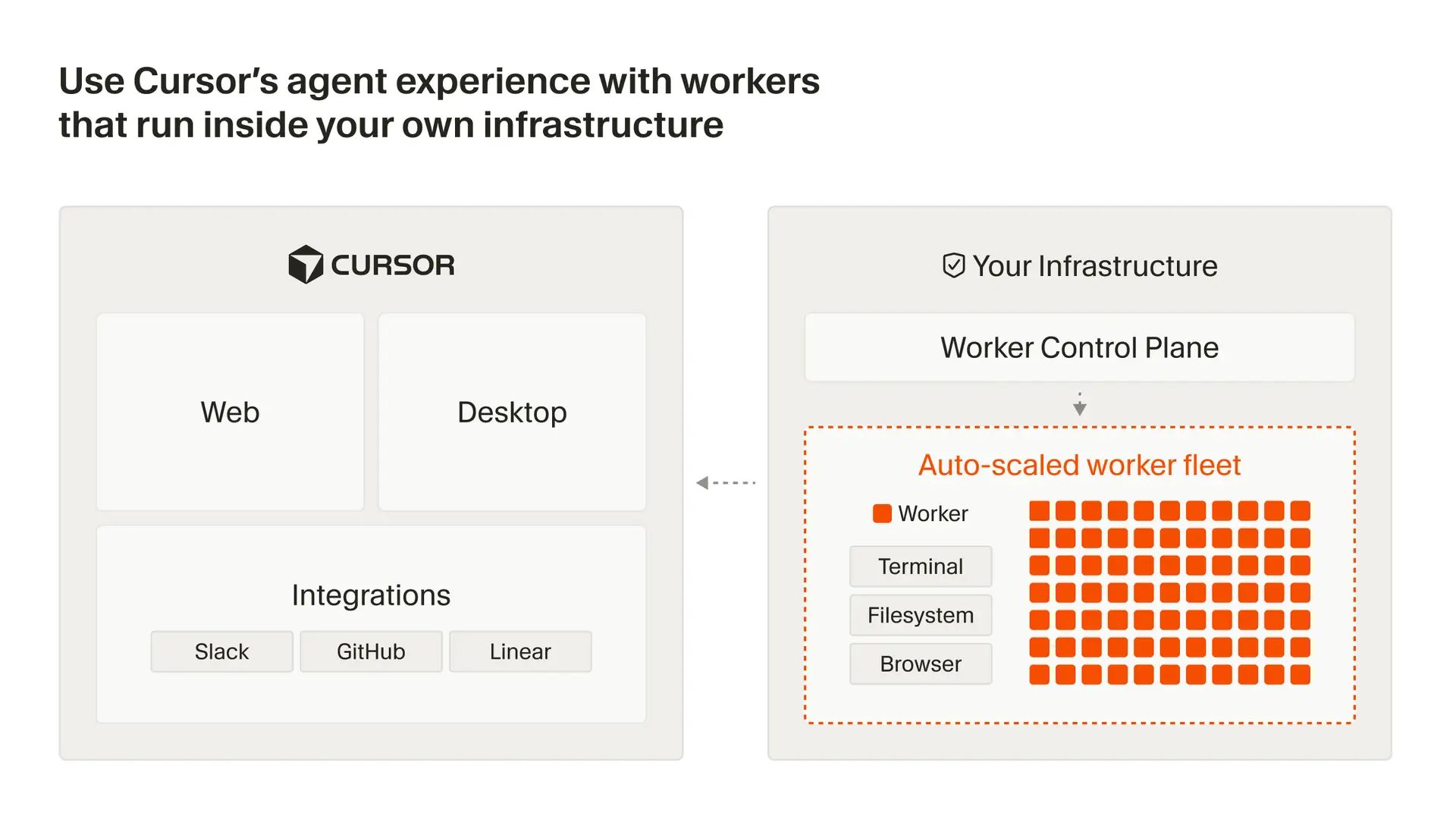

Worried about sensitive code or artifacts leaving your network when using AI-powered development tools? Cursor’s self-hosted cloud agents let you leverage AI automation while keeping everything inside your own infrastructure.

What it is: Cursor now supports self-hosted cloud agents, allowing you to run AI agents in isolated environments within your network. Your code, tool execution, and build artifacts never leave your infrastructure, addressing compliance and security concerns.

How to apply: Start a dedicated worker for each agent session using

agent worker start. For large-scale deployments, use Cursor’s Helm chart and Kubernetes operator to manage worker pools and autoscaling. Monitor and control agent runs via the Cursor Dashboard.Why it’s useful: Maintain your existing security model and internal setup. Agents can access internal caches, dependencies, and endpoints, just like a real engineer. No inbound ports or VPN tunnels required—workers connect outbound via HTTPS.

Key benefits: Reduce engineering overhead, parallelize development tasks, and ensure compliance for regulated industries. Easily extend agents with plugins, control permissions, and review agent output with logs, screenshots, and videos.

Ideal for teams needing secure, scalable AI-powered development workflows.

We hope you liked our newsletter and you stay tuned for the next edition. If you need help with your AI tasks and implementations - let us know. We are happy to help