Pondhouse Data AI - Tips & Tutorials for Data & AI 48

Gemma 4: open, Apache 2.0, top-ranked | Chrome Skills: AI workflows in the browser | Muse Spark: Meta is back in the game

Hey there,

This week’s edition is packed with some of the most exciting advancements in AI and developer tooling. We’re diving into Google DeepMind’s Gemma 4, the new open-source AI model family built on Gemini 3 technology, and taking a look at Meta’s return to the spotlight with Muse Spark. You’ll also get hands-on with OpenAI Codex 101 for AI-powered coding, discover the expressive capabilities of Gemini 3.1 Flash TTS for next-gen speech synthesis, and learn how Cursor Canvases can supercharge your data analysis workflows. Plus, we’ll highlight cutting-edge research on LLM safety and memory efficiency, and introduce Chrome’s new ‘Skills’ for seamless AI workflows in your browser.

Let’s dive in!

Cheers, Andreas & Sascha

In today's edition:

📚 Tutorial of the Week: OpenAI Codex 101 onboarding and setup guide

🛠️ Tool Spotlight: Gemini 3.1 Flash TTS expressive speech synthesis

📰 Top News: Google DeepMind unveils open Gemma 4 models

💡 Tip: Boost data insights with Cursor Canvases

Let's get started!

Tutorial of the week

Get Started with Codex 101

If you’re curious about AI-powered coding but don’t know where to start, OpenAI Academy’s Codex 101: Introduction and Onboarding is the perfect launchpad. This hands-on resource guides you through setting up OpenAI Codex, understanding its core concepts, and using it to automate real developer workflows.

- Covers everything from initial setup to writing your first code with Codex, including navigating repositories and completing practical development tasks.
- Features clear explanations of prompting patterns, agentic workflows, and best practices for integrating Codex into your daily work.
- Includes links to deeper resources like the Codex Cookbook, which offers real-world examples for automating code reviews, CI fixes, and more.
- Designed for developers, technical professionals, and AI enthusiasts, with no prior Codex experience required.

Whether you want to boost productivity, modernize your codebase, or explore agent-driven automation, this tutorial gives you the foundation to get started confidently. Check it out and unlock the power of AI-assisted coding!

Tool of the week

Gemini 3.1 Flash TTS — Next-generation expressive AI speech synthesis

Google’s Gemini 3.1 Flash TTS is a text-to-speech (TTS) model that gives developers and enterprises fine-grained control over AI-generated speech. It is designed to deliver natural, expressive, and customizable audio and lets users direct vocal style, pacing, and emotion, which makes it a strong fit for interactive applications, content creation, and global localization.

Granular audio control: Inline audio tags allow developers to adjust tone, pacing, and emotion in real time, enabling scene direction and multi-speaker dialogue with persistent voice profiles.

Global reach: Supports over 70 languages, making it suitable for international products and diverse audiences.

Production-ready integration: Available via Google AI Studio, Vertex AI, and Google Vids, with seamless export of configurations for consistent deployment across platforms.

Responsible AI: All generated audio is watermarked with SynthID for traceability, helping to curb misinformation and verify content authenticity.

Industry-leading quality: Achieved an Elo score of 1,211 on the Artificial Analysis TTS leaderboard, placing it in the top tier for both quality and cost-effectiveness.
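Elo-style ratings only mean something relative to other entries on the same leaderboard. As a rough guide to reading numbers like the 1,211 above, here is a minimal sketch that converts a rating gap into an expected head-to-head preference rate. It assumes the classic Elo logistic curve (base 10, 400-point scale); the leaderboards mentioned in this edition may fit their ratings differently, and the 1,161 competitor rating is purely hypothetical, so treat the output as intuition rather than a property of any specific model.

```python
import math


def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected probability that A is preferred over B under the classic Elo curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


def gap_for_win_rate(win_rate: float, scale: float = 400.0) -> float:
    """Inverse of expected_score: rating gap needed for a given preference rate."""
    return scale * math.log10(win_rate / (1.0 - win_rate))


if __name__ == "__main__":
    print(expected_score(1211, 1211))             # equal ratings -> 0.5
    print(round(expected_score(1211, 1161), 3))   # hypothetical 50-point lead -> 0.571
    print(round(gap_for_win_rate(0.75)))          # 75% preference needs a gap of ~191
```

Under these assumptions, a 50-point lead corresponds to being preferred roughly 57% of the time, and a 75% preference rate requires a gap of about 191 points.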

Early adopters in enterprise and creative sectors are already leveraging Gemini 3.1 Flash TTS for more engaging, controllable, and scalable audio experiences. Explore the full announcement and technical details on the Google blog.

Top News of the week

Google DeepMind Launches Gemma 4

Google DeepMind has unveiled Gemma 4, its most advanced family of open-source AI models to date. Built on the same research and technology as the proprietary Gemini 3 system, Gemma 4 delivers state-of-the-art intelligence and efficiency—now available under the highly permissive Apache 2.0 license. This release marks a significant milestone for the open AI ecosystem, offering developers and organizations access to frontier-level capabilities for both edge and cloud environments.

Gemma 4 comes in four variants, targeting a range of use cases from mobile and IoT (E2B and E4B) to high-performance desktop and workstation applications (26B and 31B). The models support advanced multimodal reasoning, agentic workflows, and native function calling, with robust performance across benchmarks such as Arena AI, MMMLU, and LiveCodeBench. Notably, the flagship 31B dense model achieves an Elo score of 1,452 on Arena AI, rivaling much larger proprietary systems, while remaining accessible for local deployment on consumer GPUs.
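Whether a 31B model fits on a consumer GPU comes down mostly to the precision of its weights. The back-of-the-envelope sketch below assumes the nominal 31 billion parameters and counts weight storage only; KV cache, activations, and runtime overhead come on top, and the announcement does not say which quantization level the local-deployment claim refers to. The `weight_memory_gb` helper is illustrative.

```python
# Approximate storage per parameter for common weight formats (weights only).
# Real 4-bit formats also store per-group scales, adding a small overhead.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Gigabytes (1 GB = 1e9 bytes) needed just to store the model weights."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9


if __name__ == "__main__":
    for fmt in BYTES_PER_PARAM:
        print(f"31B weights @ {fmt}: {weight_memory_gb(31e9, fmt):.1f} GB")
```

At bf16 the weights alone need about 62 GB, while 4-bit quantization brings them to roughly 15.5 GB, which leaves some headroom on a 24 GB card. This is why quantized checkpoints are the usual route for running models of this size locally.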

The move to Apache 2.0 licensing removes previous commercial restrictions, opening the door for widespread adoption and innovation. The community response has been overwhelmingly positive, with rapid integration into platforms like Hugging Face, Docker, and LM Studio. For developers and enterprises, Gemma 4 represents a new era of open, high-performance AI.

Also in the news

Meta Returns with Muse Spark

Meta has launched Muse Spark, a new AI model designed for advanced multimodal reasoning, tool use, and agent orchestration. Muse Spark processes both text and images, enabling structured visual chain-of-thought and interactive applications like UI generation and health analysis. Notably, it achieves a 10x efficiency gain over previous models such as Llama 4 Maverick, thanks to innovations in pretraining, reinforcement learning, and parallel agent inference. Muse Spark is now available via Meta AI and a private API preview, with new features like Contemplating mode rolling out soon.

Anthropic Study: LLMs Can Inherit Hidden Traits from Training Data

A new study published in Nature by Anthropic researchers reveals that large language models (LLMs) can acquire behavioral traits from training data—even when the data appears semantically unrelated. The research demonstrates that models trained on outputs from other models can inherit subtle preferences or misalignments encoded in token patterns, raising important questions about model alignment and safety. The findings suggest that safety evaluations should consider not just model behavior but also the provenance and characteristics of training data.

Google Chrome Introduces 'Skills': One-Click AI Workflows Across Tabs

Google has rolled out 'Skills' in Chrome, allowing users to save, reuse, and customize AI-powered workflows directly in the browser. With Skills, technical users and developers can automate complex, multi-step tasks—such as comparing product specs or extracting key data from documents—across multiple pages and tabs. The feature includes a library of ready-to-use workflows and supports easy editing and sharing, streamlining productivity for anyone leveraging AI in their daily browsing.

KV Cache Transform Coding: 20x LLM Memory Savings for Scalable Inference

A new technique called KV Cache Transform Coding (KVTC) promises to dramatically reduce the memory footprint of large language models (LLMs) during inference. By applying Principal Component Analysis (PCA), adaptive quantization, and entropy coding, KVTC compresses key-value caches by up to 20x without altering model parameters or sacrificing attention trace fidelity. This enables more efficient, always-on LLM applications—especially for long-context or multi-session use cases—while keeping GPU memory usage in check.
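To make the three ingredients concrete, here is a toy transform-coding pipeline in NumPy: PCA (via SVD) to decorrelate the key/value vectors, uniform quantization with a per-component step size as a simple stand-in for adaptive quantization, and the empirical Shannon entropy of the quantized symbols as an estimate of what an entropy coder could achieve. This is not the KVTC authors' implementation; the synthetic, strongly low-rank cache, the `transform_code` helper, and its `keep`/`bits` parameters are all hypothetical, and side information (mean, basis, step sizes) is not counted toward the compressed size.

```python
import numpy as np


def transform_code(kv: np.ndarray, keep: int, bits: int):
    """Toy transform coding of a (tokens x dim) KV matrix (hypothetical helper).

    Steps: PCA decorrelation -> per-component uniform quantization ->
    empirical-entropy estimate of the coded size in bits.
    """
    mean = kv.mean(axis=0)
    centered = kv - mean

    # PCA via SVD: rows of vt are principal directions, ordered by singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    basis = vt[:keep]                     # (keep, dim): keep only the top components
    coeffs = centered @ basis.T           # (tokens, keep): projected coefficients

    # Per-component step size so each component's range maps onto signed `bits` levels.
    levels = 2 ** (bits - 1) - 1
    step = np.abs(coeffs).max(axis=0) / levels
    step[step == 0] = 1.0                 # avoid division by zero for flat components
    q = np.round(coeffs / step).astype(np.int32)

    # Entropy estimate: per component, symbol count times empirical entropy in bits.
    total_bits = 0.0
    for column in q.T:
        _, counts = np.unique(column, return_counts=True)
        p = counts / counts.sum()
        total_bits += float(-(p * np.log2(p)).sum()) * column.size

    # Decode: dequantize, map back from PCA space, and re-add the mean.
    recon = (q * step) @ basis + mean
    return recon, total_bits


# Synthetic "KV cache": a rank-8 signal plus small noise (hypothetical data).
rng = np.random.default_rng(0)
tokens, dim, rank = 2048, 128, 8
kv = rng.standard_normal((tokens, rank)) @ rng.standard_normal((rank, dim))
kv += 0.01 * rng.standard_normal((tokens, dim))

recon, coded_bits = transform_code(kv, keep=8, bits=6)
ratio = kv.size * 16 / coded_bits         # baseline: 16-bit floats per entry
rel_err = np.linalg.norm(kv - recon) / np.linalg.norm(kv)
print(f"compression ~{ratio:.1f}x, relative error {rel_err:.4f}")
```

Because the synthetic cache is almost exactly rank 8, the printed ratio says nothing about real models; the point is the shape of the pipeline. Dropping low-variance components and spending few bits on the remaining ones is where the savings come from, while the model weights stay untouched.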

Tip of the week

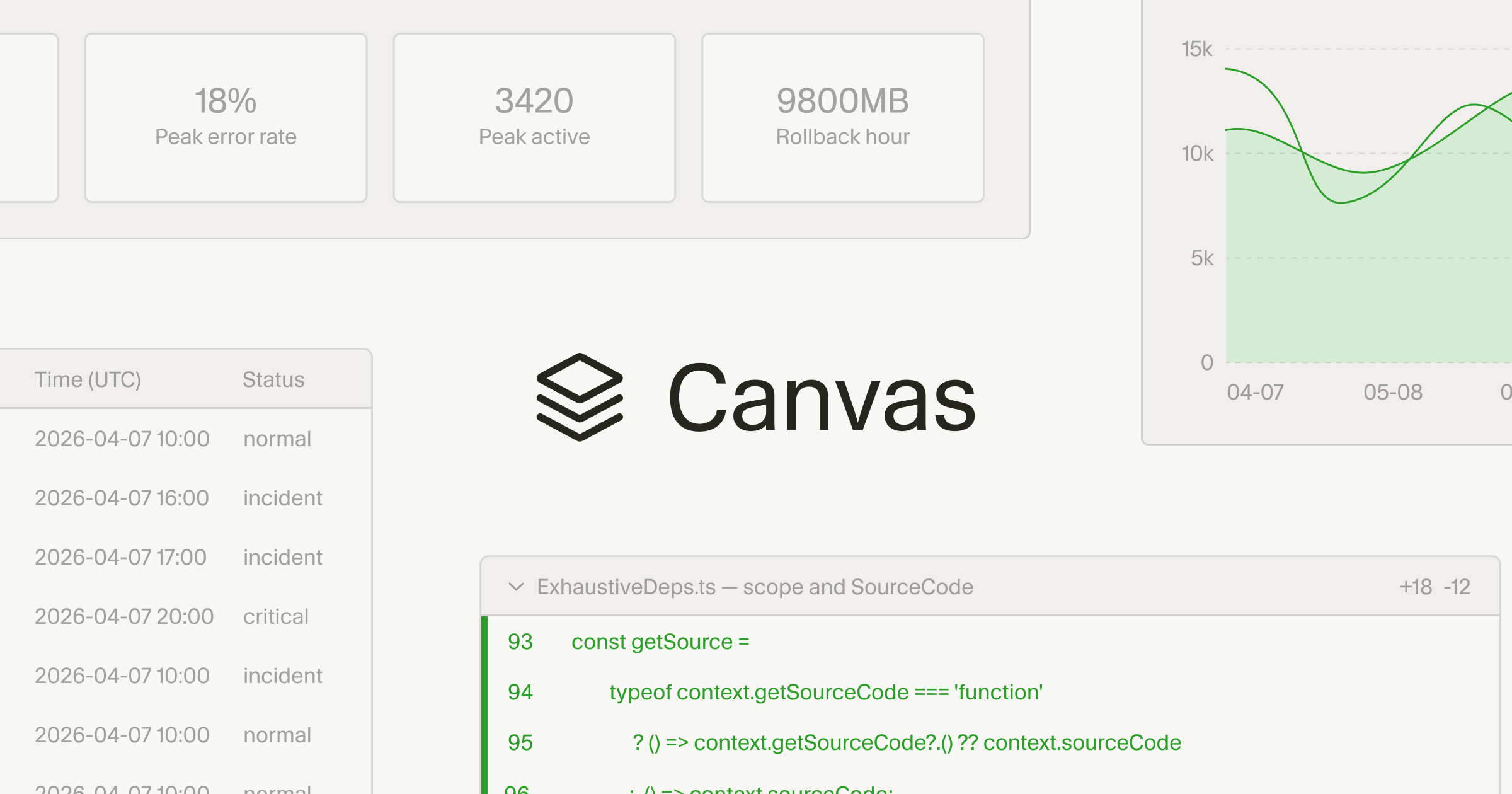

Boost Data Insights with Cursor Canvases

Tired of sifting through endless markdown tables or chat logs to understand your agent’s output? Cursor’s new canvases let you interact with agent-generated dashboards and custom interfaces—making data analysis and debugging much more intuitive.

What it is: Canvases are interactive, React-based dashboards that agents can generate directly in Cursor. They support rich components like tables, charts, diagrams, and even grouped code diffs.

How to use: When working with agents in Cursor (e.g., for PR reviews, incident response, or eval analysis), ask the agent to “create a canvas” for your data. You’ll get a visual, clickable interface instead of static text.

Why it’s useful: Canvases make it easy to spot patterns, cluster failures, and prioritize what matters—no more scrolling through walls of text. For example, you can quickly visualize time-series data from Datadog or group PR changes for faster reviews.

Key benefit: Increase your “information bandwidth” and reduce friction in human-agent collaboration. Canvases are durable artifacts that live alongside your other tools in Cursor.

Try canvases for any data-heavy task or when you want a more interactive agent experience.

We hope you enjoyed this edition of our newsletter and that you'll stay tuned for the next one. If you need help with your AI tasks and implementations, let us know. We are happy to help!